Abstract

I just set up a lot of Onion Services for many of Debian's static websites.

You can find the entire list of services on onion.debian.org.

More might come in the future.

Read more about this on either Bits or on the Tor Blog.

-- Peter Palfrader

Abstract

I recently moved our primary nameserver from orff.debian.org, which is

an aging blade in Greece, to a VM on one of our ganeti clusters. In the

process, I rediscovered a lot about our DNS infrastructure. In this post,

I will describe the many sources of information and how they all come

together.

Introduction

The Domain Name System is the hierarchical database and query protocol in use on the Internet today to map hostnames to IP addresses, to map IP addresses back to hostnames, to look up the relevant servers for certain services such as mail, and a gazillion other things. Management and authority in the DNS is split into different zones, subtrees of the global tree of domain names.

Debian currently has a bit over a score of zones. The two most

prominent ones are clearly debian.org and

debian.net. The rest is made up of debian

domains in various other top level domains and reverse zones, which

are utilized in IP address to hostname mappings.

Types and sources of information

The data we put into DNS comes from a wide range of different systems:

- Classical zonefiles maintained in git. This represents the core of our domain data. It maps services like blends.debian.org to static.debian.org or specifies the servers responsible for accepting mail to @debian.org addresses. It also is where all the ftp.CC.debian.org entries are kept and maintained together with the mirror team.
- Information about debian.org hosts, such as master, is maintained in Debian's userdir LDAP, queryable using LDAP[^ldap].
  - This includes first and foremost the host's IP addresses (v4 and v6).
  - Additionally, we store the server responsible for receiving a host's mail in LDAP (mXRecord LDAP attribute, DNS MX record type).
  - LDAP also has some specs on computers, which we put into each host's HINFO record, mainly because we can and we are old-school.
  - Last but not least, LDAP also has each host's public ssh key, which we extract into SSHFP records for DNS.
- LDAP also has per-user information. Users of debian infrastructure can attach limited DNS elements as dnsZoneEntry attributes to their user[^ldap2].
- The auto-dns system (more on that below).
- Our puppet also is a source of DNS information. Currently it generates only the TLSA records that enable clients to securely authenticate certificates used for mail and HTTPS, similar to how SSHFP works for authenticating ssh host keys.
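To make the SSHFP item a bit more concrete, here is a minimal Python sketch of how a host's public ssh key can be turned into SSHFP records. It is not the code our LDAP export actually runs; the function name sshfp_records and the dummy key are made up for illustration. The algorithm and fingerprint-type numbers are the ones registered for the SSHFP record type.

```python
import base64
import hashlib

# SSHFP algorithm numbers from the IANA registry (RFC 4255, RFC 6594, RFC 7479).
SSHFP_ALGORITHMS = {
    "ssh-rsa": 1,
    "ssh-dss": 2,
    "ecdsa-sha2-nistp256": 3,
    "ecdsa-sha2-nistp384": 3,
    "ecdsa-sha2-nistp521": 3,
    "ssh-ed25519": 4,
}


def sshfp_records(hostname, pubkey_line):
    """Return SSHFP records (SHA-1 and SHA-256) for one OpenSSH public key line.

    sshfp_records is a hypothetical helper, not part of any Debian tool.
    """
    key_type, key_b64 = pubkey_line.split()[:2]
    algorithm = SSHFP_ALGORITHMS[key_type]
    # The fingerprint is a hash over the decoded key blob, not over the base64 text.
    blob = base64.b64decode(key_b64)
    records = []
    for fp_type, digest in ((1, hashlib.sha1), (2, hashlib.sha256)):
        fingerprint = digest(blob).hexdigest()
        records.append(f"{hostname}. IN SSHFP {algorithm} {fp_type} {fingerprint}")
    return records


if __name__ == "__main__":
    # Dummy key material for illustration only; this is not a real host key.
    dummy = "ssh-ed25519 " + base64.b64encode(b"not a real key blob").decode()
    for record in sshfp_records("master.debian.org", dummy):
        print(record)
```

Running ssh-keygen -r on a host produces records of the same shape, which is a handy way to cross-check the output.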

Debian's auto-dns and geo setup

We try to provide the best service we can. As such, our goal is that,

for instance, user access to www or bugs should always

work. These services are, thus, provided by more than one machine on

the Internet.

However, HTTP does not require clients to retry a different server if one of those in a set is unavailable. For us, this means that when a host goes down, it needs to be removed from the corresponding DNS entry. Ideally, the world wouldn't have to wait for one of us to notice and react before the service works again.

Our solution for this is our auto-dns setup. We maintain a list of hosts that are providing a service. We monitor them closely. Whenever a server goes away or comes back we automatically rebuild the zone that contains the element.

This setup also lets us reboot servers cleanly — since one of the

things we monitor is "is there a shutdown running", we can, simply by

issuing a shutdown -r 30 kernel-update, de-rotate the machine in

question from DNS. Once the host is back it'll automatically get

re-added to the round-robin zone entry.
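The rotation logic described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the real auto-dns code: the Host class, its fields, the TTL and the addresses (taken from the documentation ranges) are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Host:
    """Monitoring state for one machine (hypothetical structure)."""
    name: str
    addresses: list       # IPv4 and/or IPv6 address strings
    reachable: bool       # result of the service check
    shutting_down: bool   # True while a shutdown is scheduled


def in_rotation(host):
    """A host stays in DNS only if it answers and no shutdown is pending."""
    return host.reachable and not host.shutting_down


def render_service_snippet(service, hosts, ttl=300):
    """Build the address records for one service from the hosts in rotation."""
    lines = []
    for host in hosts:
        if not in_rotation(host):
            continue
        for address in host.addresses:
            rrtype = "AAAA" if ":" in address else "A"
            lines.append(f"{service}\t{ttl}\tIN\t{rrtype}\t{address}\t; {host.name}")
    return "".join(line + "\n" for line in lines)


if __name__ == "__main__":
    hosts = [
        Host("host-a", ["192.0.2.10", "2001:db8::10"], True, False),
        Host("host-b", ["192.0.2.20"], True, True),    # shutdown -r running
        Host("host-c", ["192.0.2.30"], False, False),  # down
    ]
    print(render_service_snippet("bugs", hosts), end="")
```

A real setup also has to decide what to publish when no host passes the checks; the sketch simply returns an empty snippet in that case.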

The auto-dns system produces two kinds of output:

- In service-mode it generates a file with just the address records for a specific service. This snippet is then included in its zone using a standard bind $INCLUDE directive. Services that work like this include bugs and static (service definition for static).
- In zone-mode, auto-dns produces zonefiles. For each service it produces a set of zonefiles, one for each out of a set of different geographic regions. These individual zonefiles are then transferred using rsync to our GEO-IP enabled nameservers. This enables us to give users a list of security mirrors closer to them and thus hopefully faster for them.

Tying it all together

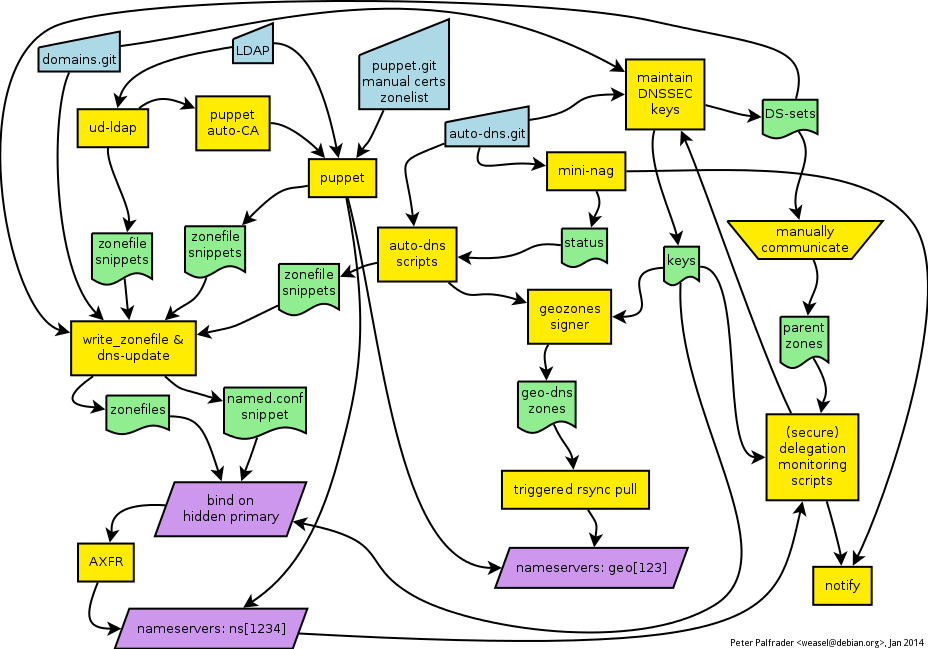

Figure 1: The Debian DNS Rube Goldberg Machine.

Once all the individual pieces of source information have been

collected, the dns-update and write_zonefile scripts from our

dns-helpers repository take over the job of building complete

zonefiles and a bind configuration snippet. Bind then loads the zones

and notifies its secondaries.

For geozones, the zonefiles are already produced by auto-dns'

build-zones and those are pulled from the geo nameservers via rsync

over ssh, after an ssh trigger.

and also DNSSEC

All of our zones are signed using DNSSEC. We have a script in

dns-helpers that produces, for all zones, a set of rolling signing

keys. For the normal zones, bind 9.9 takes care of signing them

in-process before serving the zones to its secondaries. For our

geo-zones we sign them in the classical dnssec-signzone way before

shipping them.

The secure delegation status (DS set in parent matches DNSKEY in child)
is monitored by a set of nagios tests, from both dsa-nagios and
dns-helpers. Of these, manage-dnssec-keys has a dual job: not
only will it warn us if an expiring key is still in the DS set, it can
also prevent the key from expiring by issuing timely updates of the
key's metadata.
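For readers wondering what "DS set in parent matches DNSKEY in child" means in practice, here is a small Python sketch of the comparison. It is not taken from dsa-nagios or dns-helpers; all function names are hypothetical, and fetching the records from DNS is left out. The key tag follows RFC 4034, Appendix B, and the digest is DS digest type 2 (SHA-256).

```python
import base64
import hashlib
import struct


def dnskey_rdata(flags, protocol, algorithm, public_key_b64):
    """Wire-format RDATA of a DNSKEY record."""
    return struct.pack("!HBB", flags, protocol, algorithm) + base64.b64decode(public_key_b64)


def key_tag(rdata):
    """Key tag per RFC 4034, Appendix B (not valid for the old algorithm 1)."""
    total = 0
    for index, byte in enumerate(rdata):
        total += byte << 8 if index % 2 == 0 else byte
    total += (total >> 16) & 0xFFFF
    return total & 0xFFFF


def owner_wire(name):
    """Canonical (lower-case) wire format of a domain name."""
    wire = b""
    for label in name.rstrip(".").lower().split("."):
        if label:
            wire += bytes([len(label)]) + label.encode("ascii")
    return wire + b"\x00"


def ds_sha256(name, rdata):
    """DS digest type 2: SHA-256 over owner name plus DNSKEY RDATA."""
    return hashlib.sha256(owner_wire(name) + rdata).hexdigest().upper()


def delegation_is_secure(name, dnskey_rdatas, parent_ds):
    """True if any (key tag, digest) pair in the parent matches a child DNSKEY."""
    child = {(key_tag(rdata), ds_sha256(name, rdata)) for rdata in dnskey_rdatas}
    return any((tag, digest.upper()) in child for tag, digest in parent_ds)


if __name__ == "__main__":
    # Toy key material, far too short to be a real key.
    rdata = dnskey_rdata(257, 3, 8, base64.b64encode(b"\x01\x02").decode())
    ds = [(key_tag(rdata), ds_sha256("debian.org", rdata))]
    print(delegation_is_secure("debian.org", [rdata], ds))
```

A monitoring check built on this would alert when the function returns False for a zone that is supposed to be securely delegated.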


[^ldap]: ldapsearch -h db.debian.org -x -ZZ -b dc=debian,dc=org -LLL 'host=master'

[^ldap2]: ldapsearch -h db.debian.org -x -ZZ -b dc=debian,dc=org -LLL 'dnsZoneEntry=*' dnsZoneEntry

-- Peter Palfrader

While setting up GeoDNS for parts of the debian.org zone, we set up a

new subzone security.geo.debian.org. This was mainly due to

the fact we didn't want to mess up the existing zone while experimenting

with GeoDNS.

Now that our GeoDNS setup has been working for more than half a year without any problems, we will drop this zone.

We will do that in two phases.

Phase 1

Beginning on July 1st, we will redirect all requests to

security.geo.debian.org to a static webpage indicating that

this subzone is deprecated and should not be used any more. If you

still have security.geo.debian.org in your apt sources.list, updates

will fail.

Phase 2

On August 1st, we will stop serving the subzone

security.geo.debian.org from our DNS servers.

Conclusion

In case you use a security.geo.debian.org entry in your

/etc/apt/sources.list, now is the best time to change that

entry to security.debian.org. Both zones currently serve

the same content.

We are in the process of deploying DNSSEC, the DNS Security Extensions, on the Debian zones. This means properly configured resolvers will be able to verify the authenticity of information they receive from the domain name system.

The plan is to introduce DNSSEC in several steps so that we can react to issues that arise without breaking everything at once.

We will start with serving signed debian.net and

debian.com zones. Assuming nobody complains loudly enough,

the various reverse zones and finally the debian.org zone will

follow. Once all our zones are signed we will publish our trust anchors

in ISC's DLV Registry, again in

stages.

The various child zones that are handled differently from our normal
DNS infrastructure (mirror.debian.net, alioth, bugs, ftp, packages,
security, volatile, www) will follow at a later date.

We are using bind 9.6 for NSEC3 support and our fork of RIPE's DNSSEC Key Management Tools for managing our keys because we believe that it integrates nicely with our existing DNS helper scripts, at least until something better becomes available.

We will use NSEC3RSASHA1 with key sizes of 1536 bits for the KSK and 1152 bits for the ZSK. Signature validity period will most likely be four weeks, with a one week signature publication period (cf. RFC4641: DNSSEC Operational Practices).

Zone key rollovers will happen regularly and will not be announced in any specific way. Key signing key rollovers will probably be announced on the debian-infrastructure-announce list until such time that our zones are reachable from a signed root. KSK rollovers for our own child zones (www.d.o et al.), once signed, will not be announced because we can just put proper DS records in the respective parent zone.

Until we announce the first set of trust anchors on the mailing list, the keysets present in DNS should be considered experimental. They can be changed at any time, without observing standard rollover practices.

Please direct questions or comments to either the debian-admin or, if you want a more public forum, the debian-project list at lists.debian.org.


-- Peter Palfrader

The Debian Project currently runs about 100 machines all over the world with different services. Those are mainly managed by the Debian System Administration team. For central configuration management we use Puppet. The Puppet config we use is publicly available here.

Our next goal is to have a more or less central configuration of our iptables rules on all those machines. Some of the machines have home-brewed firewall scripts, some use ferm.

Your mission, if you choose to accept it, is to provide us with a new dsa-puppet git branch with a module "ferm" that we can roll out to all our hosts.

It might want to use information from the other puppet modules like "apache2_security_mirror" or "buildd" to decide which incoming traffic should be allowed.

DSA will of course provide you with all necessary further information.

We are re-purposing raff.debian.org as a new security mirror to be shipped to South America. The raff.debian.org most of you have used as buildd.debian.org in the past will cease to exist in the near future.

raff.debian.org will cease acting as a DNS server for the debian.org zone in a few days; therefore, we will add two new hosts, senfl.d.o and ravel.d.o.

All other services formerly hosted on raff.d.o should already have been moved by now. We just want to encourage those of you who had a login on raff to back up your home directory if you care about the data stored there, as we will not move it.

The current plan is to shut down the current raff by the end of the year (so in about 7 days).

I recently blogged about the GeoDNS setup we plan for security.debian.org. Even though all DSA team members agree that the GeoDNS setup for security.debian.org should go live as soon as possible, we are still afraid of breaking an important service like security.d.o.

Yesterday I decided without further ado to float a trial balloon and converted the DNS entries for the Debian Project homepage to our GeoDNS setup. While doing so, we found out that some parts of our automatic deployment scripts still need to be adjusted to serve more than one subdomain of the project.

That setup has been live for about eighteen hours now, and the project homepage now resolves its IPs via GeoDNS. For now, we are using senfl.d.o for North America, www.de.debian.org and www.debian.at for Europe, and klecker.d.o for the rest of the world. From what I can see in the GeoDNS logs, it seems to work fine and the load stays reasonably low, so after a short test period we might add additional services like security.debian.org to GeoDNS.

DSA is currently playing around with a patched version of bind9 (based on a patch we received from kernel.org people) to implement GeoDNS for security.debian.org. You might have noticed that we currently have a round robin list of up to seven hosts in the security.debian.org rotation. Depending on time and luck, your apt might currently pick a host located halfway around the globe from you, sometimes resulting in really slow download rates.

Idea

The current idea is to only present a list of security mirrors to you which are located on the continent you live on. We are aware that this won't work for all continents at the moment. For this reason we are also currently moving machines around the globe.

How to test

The easiest way for you to test is using bind9's dig command.

When trying from Germany one should get:

zobel@lunar:~% dig -ttxt +short security.geo.debian.org
"Europe view"

When trying from US one should get:

zobel@gluck:~% dig -ttxt +short security.geo.debian.org
"North America view"

Technique

The patch we used for bind9 uses libgeoip and MaxMind's GeoLite Country database. This patch was necessary to get bind to play nicely.

As we don't want to break security.debian.org at this stage of our testing, we decided to add a new subdomain security.geo.debian.org with which we are currently playing.

With an ACL for EU that lists all the countries belonging to the European subcontinent, a config snippet for security.debian.org's zone looks like this:

// Europe
acl EU {
        country_AD;
        country_AL;
        country_AT;
        country_AX;
        country_BA;
        country_BE;
        country_BG;
        country_BY;
        country_CH;
        country_CZ;
        country_DE;
        country_DK;
        country_EE;
        country_ES;
        country_FI;
        country_FO;
        ...
};

// Clients matching the EU acl get the Europe-specific zonefile.
view "EU" {
        match-clients {
                EU;
        };
        zone "security.geo.debian.org" {
                type master;
                file "/etc/bind/zones/security.debian.org.EU.zone";
                notify no;
        };
};

To be sure we don't miss any countries, we added an additional default view to catch whatever the country codes didn't:

view "other" {
        match-clients { any; };
        zone "security.geo.debian.org" {
                type master;
                file "/etc/bind/db.security.debian.org";
                notify no;
        };
};
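Bind checks views in the order they appear in the config and uses the first one whose match-clients matches, which is why the catch-all view has to come last. The following Python sketch mimics that selection. It is only a model of the behaviour, not part of the patch: the country code is assumed to come from a GeoIP lookup that is not shown, select_view is a made-up name, and the country set is truncated just like the ACL above.

```python
# Country codes from the EU acl above (the original list is longer).
EU_COUNTRIES = {
    "AD", "AL", "AT", "AX", "BA", "BE", "BG", "BY",
    "CH", "CZ", "DE", "DK", "EE", "ES", "FI", "FO",
}

# Views in config order: (view name, matching countries, zonefile).
# None as the country set stands for "match-clients { any; };".
VIEWS = [
    ("EU", EU_COUNTRIES, "/etc/bind/zones/security.debian.org.EU.zone"),
    ("other", None, "/etc/bind/db.security.debian.org"),
]


def select_view(country_code):
    """Return (view name, zonefile) of the first view that matches.

    country_code is the client's two-letter country, or None if the
    GeoIP lookup found nothing.
    """
    for name, countries, zonefile in VIEWS:
        if countries is None or country_code in countries:
            return name, zonefile
    raise LookupError("no view matched")


if __name__ == "__main__":
    for code in ("DE", "US", None):
        print(code, select_view(code))
```

If the "other" entry were listed first, every client would end up in it and the EU view would never be used.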